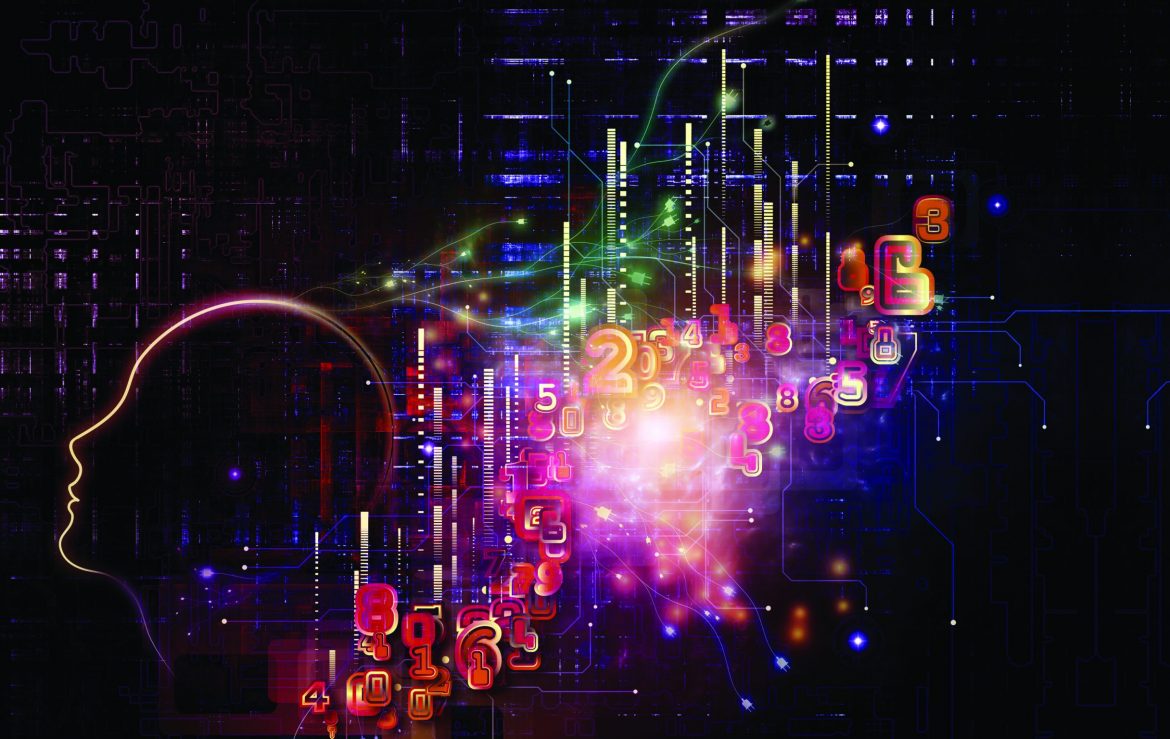

The human brain is often depicted as the most important battlespace of our times, the Information Age. Arguably, the brain, the “cognitive dimension”, has always been front and center in conflict. After all, the ultimate goal of any military operation at any point in time is to change the behavior of the adversary. In some situations, spreading pamphlets has a longer-lasting effect than dropping bombs. As we currently live in a global geopolitical setting of strategic competition “short of war”, such non-lethal information warfare is in high demand.

This article looks at the practice of trying to influence individuals or groups through online means with the aim of destabilizing a society or “shaping” the ground for a potential future conflict. It deals with how influence campaigns can be effective, the current and future methods and techniques being deployed, and the challenges that this type of non-physical fighting poses to states and to the task of military organizations to safeguard security.

Information: weapons of mass disruption?

It is often said that the party which controls the information, and thus the narrative, controls the battlefield. Of no lesser importance is that this party will also control the way history is ultimately written and remembered. As the stakes are high, fighting with information is as old as conflict itself. However, in the last couple of years we have witnessed a revolutionary change in the methods and instruments deployed. New and innovative techniques to “weaponize information” have come into vogue and are increasingly effective due to a change in the way people search for and find information.

In our world of hyperconnectivity, borderless communications and poorly regulated social media spaces, (dis)information, deception and manipulation have become more scalable, less traceable and, as a result, more disruptive. The possibilities to use (and abuse) online information for political and military objectives have grown exponentially and will continue to do so in the near future, bringing new challenges. They have the potential to “nudge” the behavior not only of an individual, but of large parts of a society. The dynamics of influence campaigns can be illustrated with a reference to the well-known military concept of the “OODA-loop” (Observe, Orient, Decide, Act). With an influence campaign, an actor targets the first two “O”s, namely what an individual or a group observes and how it orients itself, meaning how it interprets what is being observed. Based on that orientation, the target will make decisions and act accordingly. Manipulating the information used to understand a situation can therefore trick the adversary into an unwanted decision or act.

The use of non-lethal, powerful weapons such as information has in particular been a preferred strategy of those states and non-state actors interested in challenging a status quo. Long unable to compete with the United States at the level of military hard power, countries such as Russia and China have studied American society (and others) well and have found and targeted “weaknesses” of a non-military kind. For the US (as for Europe, for example), those “weaknesses” were primarily found in the openness of society, the liberal nature of the media landscape, the right to express oneself freely and a latent but growing national and international distrust of the ultimate goals of authorities and elites. On these societal grounds, manipulated information and conspiracy theories could relatively easily be disseminated to reach those susceptible to them. The messages directed at these groups and individuals are often aimed at delegitimizing the overall power and the entire value system of a state, including its domestic and foreign political and, where relevant, military actions.

Evolving methods and techniques

What makes mastering information warfare nowadays ever more urgent is that its methods and techniques are tied up with technological development and innovations. The pace of change is significant and so ideally, states and military organizations have not only a good understanding of what happens today and what happened yesterday, but also of what will happen tomorrow.

Currently, a lot of time and resources are being spent on the creation of “news” on new websites, on social media and on platforms such as YouTube, with the objective of tweaking facts to fit an overarching, often plausible narrative.

Information is being disseminated faster and on a larger scale. Another method behind large (dis)information campaigns is the use of troll factories, which employ large numbers of people to post online comments under fake profiles, and the deployment of so-called “professional trolls”, individuals who are paid large sums of money to become influencers or opinion agents. In the future, this practice could be partly automated, which would add another level of scale. Another well-known method is the use of bots, which are programmed to send out messages automatically when, for example, triggered by a set of keywords.

While large in quantity, these automated messages remain rather superficial and are not necessarily credible, as bots cannot yet interact convincingly with human beings. That is nonetheless likely to change in the future, as computers learn to read the emotions of those they interact with.

Current practices are still rather labor-intensive and need human involvement at almost every stage. Also, with some serious investigation, fakes produced with current practices can still be distinguished from original or real content, even though verification often takes so much time that the damage has already been done.

The near future will bring some additional, serious challenges, in particular with regard to authentication and attribution. As machine learning and other artificial intelligence (AI) technologies develop slowly but surely, the first arena in which they will likely become a serious additional risk is the production of synthetic online media, also known as “deep fakes”.

New solutions using “Generative Adversarial Networks” (GANs) are soon expected to create hyper-realistic online content (audio, video, images), with the software available to virtually anyone. The fabrications are expected to be so realistic that it will be close to impossible to distinguish fake from original and real content, even with painstaking investigation by humans or by machines. Who made the fabrication might also never be discovered.

Information warfare, security and the armed forces

In most of the world’s societies, pictures, audio and video are still perceived as evidence of reality. Blurring the line between real and fake even further will therefore create a serious problem. Deep fakes, spread on a massive scale through social media and other internet sites, can lead to societal destabilization (but perhaps also to stabilization in other situations).

In military operations, “deep fake” orders that appear to come from a respected commander could evidently lead to a very dangerous situation. “Deep fakes” could also be employed to circumvent authentication, for example when biometric recognition applications are used to verify identities and documents online. Another problematic example is the construction of false evidence, for example by manipulating satellite images. Proving that something is actually real against accusations of being fake will similarly become an issue.

New opportunities in the information environment have thus fundamentally changed the current threat environment. All over the world, military organizations will be caught up in an extremely densely connected and contested information space, with conflicting narratives and blurring lines between real and fabricated.

To cope in this environment and keep executing their tasks, the armed forces, like others in society, will need to increase the relevant knowledge and expertise and start incorporating many more insights from social psychology, neurobiology and the world of advertising. They need to find ways to deal with possible scenarios of non-attribution of hostile attacks and with the growing challenges of authentication.

Countries such as Russia and China have certainly had a first-mover advantage in the information space. Western and other militaries are catching up. But in many military settings, there is strong reluctance to incorporate such “non-lethal” fighting modes, given the traditional military focus on physical power and lethal capabilities. Also, in some countries, fighting with (dis)information and other means of deception is seen as deeply dishonorable and, in some places, national parliaments might be highly opposed to such practices. But despite these objections and concerns, adding information warfare to the military capability mix is gathering steam. The US, in 2018, adopted the Department of Defense Strategy for Operations in the Information Environment and the Joint Concept for Operating in the Information Environment (JCOIE). It also added information as a warfighting function, a move that was mirrored by NATO. In many other military organizations, units are being established that focus on information warfare, strategic communication and/or countering influence operations, including, for example, in the United Kingdom and in Australia.

At the same time, states realize that the real challenge and solution lie in preparing society. The US defence research agency DARPA may already have spent millions of dollars on a research programme aimed at identifying “deep fakes”, but verification will remain time-consuming and will likely not prevent the damage. As a consequence, the focus is also ever more strongly on building up resilience, on making communities less susceptible to deception and on educating them about the challenges the next generation of AI-enabled disinformation technologies is likely to bring. Not just for the armed forces, but for society as a whole, the urgency to master information warfare is growing.

By: Dr. Saskia van Genugten

(former MoD advisor in the Netherlands, independent consultant on defense and security-related matters and owner of Sqirl Analysis).